Audio research group at Tampere University

Sounds like science

Main Research Areas

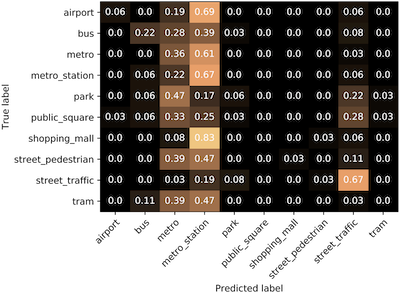

Content analysis of sounds

Content analysis of general audio is the automatic extraction of relevant information from audio, such as the characteristics of the acoustic scene and the sound sources present. Applications range from simple classification tasks that enable context awareness, to security and surveillance systems based on detected sounds of interest, to the organization, indexing, tagging, and querying of large audio databases.

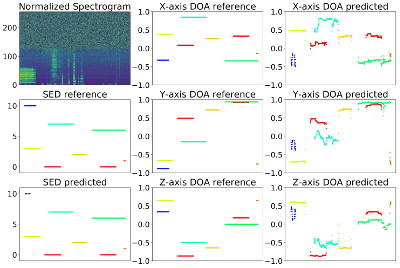

Spatial audio

Microphone arrays link the physical locations of sound objects to computer software and make it possible to capture the sound field. Applications of microphone arrays include determining physical location, such as speaker localization and speaker position tracking, as well as signal enhancement and source separation.
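As a rough illustration of how a microphone pair can reveal a source direction, the sketch below estimates the delay between two microphone signals by cross-correlation and converts it to an arrival angle. It is a minimal example under stated assumptions, not the group's method: the function names, the far-field model, the 0.2 m microphone spacing, the 16 kHz sample rate, and the synthetic noise signal are all chosen here for illustration.

```python
import math
import random


def estimate_delay(ref, delayed, max_lag):
    """Return the lag in samples that maximizes the cross-correlation."""
    best_lag, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i in range(len(ref)):
            j = i + lag
            if 0 <= j < len(delayed):
                score += ref[i] * delayed[j]
        if score > best_score:
            best_lag, best_score = lag, score
    return best_lag


def arrival_angle(lag, sample_rate, mic_distance, speed_of_sound=343.0):
    """Convert a sample delay to an angle in degrees (far-field assumption)."""
    tau = lag / sample_rate
    # Clamp so that noise in the delay estimate cannot leave asin's domain.
    s = max(-1.0, min(1.0, speed_of_sound * tau / mic_distance))
    return math.degrees(math.asin(s))


# Synthetic test signal: white noise reaching the second microphone 5 samples late.
random.seed(0)
source = [random.gauss(0.0, 1.0) for _ in range(2000)]
true_lag = 5
mic_a = source
mic_b = [0.0] * true_lag + source[:-true_lag]

lag = estimate_delay(mic_a, mic_b, max_lag=20)
angle = arrival_angle(lag, sample_rate=16000, mic_distance=0.2)
print(lag, round(angle, 1))
```

Real recordings contain reverberation and noise, so practical systems typically use more robust correlation weightings and more than two microphones; the brute-force search here is only meant to show the principle.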

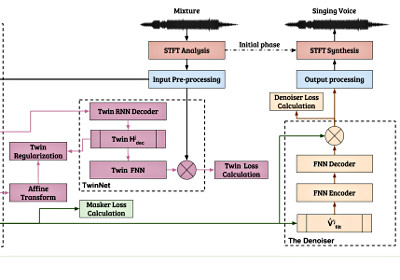

Source separation and signal enhancement

Source separation is the task of estimating the signal produced by an individual sound source from a mixture signal consisting of several sources. It is a fundamental problem in many audio signal processing tasks, since isolated sources can be analyzed and processed much more accurately than mixtures of sounds.